Europe Takes Bold Step with New AI Law! Weighing commercial interests against ethical challenges, the EU has introduced groundbreaking legislation. The law categorizes AI applications by their potential risk so that regulation is proportionate, and it stresses a human-centric approach in this era of technological advancement.

"The AI Act is a starting point for a new model of governance built around technology," says European Parliament member Dragos Tudorache, emphasizing the EU's leading role in addressing AI challenges worldwide.

European Parliament Approves Groundbreaking AI Law

On Wednesday, 13 March 2024, lawmakers in the European Parliament approved the AI law, which had been in preparation for years and will apply in all 27 member countries.

Some provisions will take effect later in 2024. The legislation is expected to serve as a reference point for other countries seeking to regulate this rapidly advancing technology.

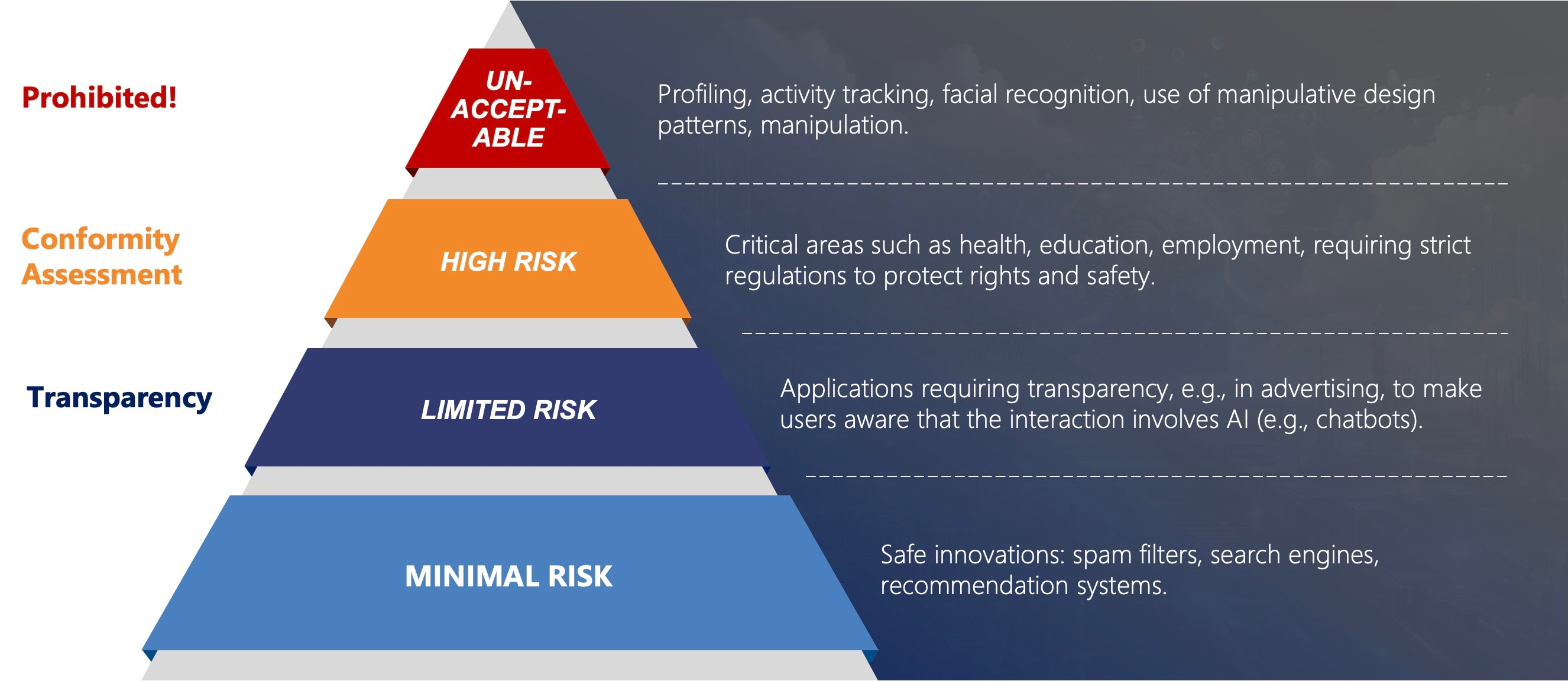

Understanding the Four Risk Levels in the EU AI Act

The EU's AI law follows the principle of risk proportionality: the higher the risk associated with an AI application, the stricter the requirements. Most AI systems, such as anti-spam filters, are considered low-risk, and the rules that apply to them are voluntary. High-risk applications, such as those in medicine or critical infrastructure, must meet stringent criteria, such as using high-quality data. AI applications deemed unacceptably risky, such as social scoring systems or certain kinds of predictive policing, are prohibited. Police use of facial biometric recognition in public spaces is also banned, with exceptions for serious crimes.

Application Requirements Under the New AI Regulations

- Prohibited: applications that pose an unacceptable risk are banned outright.

- Conformity assessment: high-risk applications must pass a verification process confirming that they meet specific safety and ethical standards.

- Transparency: users must be told how the application works, what data it uses, and for what purpose, with the aim of increasing trust in and understanding of AI technology.

- Minimal requirements: for applications at the lowest level of risk, obligations are limited to basic ethical and safety principles.
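The four-tier scheme above can be pictured as a simple lookup from risk level to obligation. The following is only an illustrative sketch: the tier names (`UNACCEPTABLE`, `HIGH`, `LIMITED`, `MINIMAL`), the `EXAMPLE_USE_CASES` table, and the `obligation_for` helper are assumptions made for this example, and the use cases are the ones mentioned in this article, not a legal classification.

```python
from enum import Enum


class RiskLevel(Enum):
    # Tier names are assumed labels for the four levels described above.
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligation attached to each tier, paraphrasing the list above.
OBLIGATIONS = {
    RiskLevel.UNACCEPTABLE: "prohibited",
    RiskLevel.HIGH: "conformity assessment",
    RiskLevel.LIMITED: "transparency",
    RiskLevel.MINIMAL: "minimal requirements",
}

# Hypothetical mapping built only from examples named in the article.
EXAMPLE_USE_CASES = {
    "social scoring": RiskLevel.UNACCEPTABLE,
    "medicine": RiskLevel.HIGH,
    "critical infrastructure": RiskLevel.HIGH,
    "anti-spam filter": RiskLevel.MINIMAL,
}


def obligation_for(use_case: str) -> str:
    """Return the obligation for a known example use case."""
    level = EXAMPLE_USE_CASES[use_case]  # raises KeyError if unknown
    return OBLIGATIONS[level]


if __name__ == "__main__":
    for name in EXAMPLE_USE_CASES:
        print(f"{name}: {obligation_for(name)}")
```

In practice, deciding which tier an application falls into is a legal judgment rather than a dictionary lookup; the sketch only shows how stricter obligations attach to higher tiers.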

How EU Law Addresses Generative AI

Early versions of the EU law focused on AI built for specific tasks, such as CV analysis. However, the rapid development of generative AI systems such as ChatGPT prompted policymakers to update the regulations.

Key Provisions in the Updated AI Legislation

The updated law introduces rules for generative AI models, such as those behind chatbots, that can create realistic content. Developers, from startups to giants like OpenAI and Google, must disclose the sources of the data (texts, images, videos) used to train their AI and must comply with copyright law. AI-generated content that imitates real individuals, places, or events must be clearly labeled as manipulated.

Addressing Systemic Risks in Large AI Models

Special attention is given to large AI models that may pose "systemic risk," including OpenAI's GPT-4 and Google's Gemini. The EU is concerned that these systems could cause serious accidents, be misused for cyberattacks, or spread harmful stereotypes.

Ensuring Responsibility and Security in AI Applications

Providers of these systems must assess and minimize risks, report serious incidents, implement cybersecurity measures, and disclose how much energy their models consume. These regulations aim to keep pace with AI advancements while safeguarding society from potential threats.

Global Implications of Europe's AI Regulations

The European Union's Role in AI Governance

By first suggesting AI regulations as early as 2019, Europe became a pioneer in the global regulation of new technologies. Brussels has a track record of setting standards for rapidly developing sectors, inspiring other countries to take similar action.

International Efforts in AI Regulation

- In the USA, President Joe Biden issued a comprehensive executive order on AI, aimed in part at establishing international standards and regulations. Lawmakers in at least seven US states are working on their own AI regulations, a sign of growing interest in regulating the field.

- President Xi Jinping of China proposed the Global AI Governance Initiative, promoting the fair and safe use of AI. China has also issued "interim measures" regulating AI that generates text, images, audio, and video for people inside the country.

- Elsewhere, countries from Brazil to Japan, along with global groupings such as the UN and the G7, are working on protective frameworks for AI, which shows the global scope and importance of regulatory initiatives in this field.

The European regulatory initiative thus has a significant global impact, prompting other countries to reflect on their own regulations regarding artificial intelligence.

Timeline for Implementation of the New AI Law

The AI law is expected to be formally adopted in May or June 2024, once remaining procedures are complete, including approval by EU member states. The regulations will take effect gradually, starting with a ban on certain AI systems six months after the law enters into force.

Rules for general-purpose AI, such as chatbots, will apply one year after the law enters into force, and the full set of rules for high-risk AI will apply by mid-2026. Each EU country will set up a supervisory body to handle AI-related complaints, and Brussels will establish an AI Office to enforce the regulations.

Penalties and Consequences for Non-Compliance with AI Regulations

Violators may face fines of up to €35 million or 7% of the company's global revenue, whichever is higher. Italian lawmaker Brando Benifei, a member of the European Parliament, suggests that further rules could follow after the upcoming European elections to cover more aspects of AI, such as its use in the workplace.
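To make the penalty ceiling concrete, here is a minimal sketch of the arithmetic, assuming the higher of the two amounts sets the upper bound for a company. The function name `max_fine_eur` and the revenue figures are hypothetical, chosen only for illustration; actual fines depend on the type of violation and are set by regulators.

```python
FIXED_CAP_EUR = 35_000_000  # fixed ceiling cited above
REVENUE_PERCENT = 7         # share of global revenue cited above


def max_fine_eur(global_revenue_eur: int) -> int:
    """Upper bound of the fine in whole euros.

    Assumption: the ceiling is whichever of the two amounts is higher.
    Integer arithmetic avoids floating-point rounding on large sums.
    """
    revenue_based = global_revenue_eur * REVENUE_PERCENT // 100
    return max(FIXED_CAP_EUR, revenue_based)


if __name__ == "__main__":
    # Hypothetical companies with different global revenues.
    for revenue in (100_000_000, 500_000_000, 2_000_000_000):
        print(f"revenue EUR {revenue:,}: ceiling EUR {max_fine_eur(revenue):,}")
```

Under this assumption, the revenue-based figure only exceeds the fixed €35 million once global revenue passes €500 million, so the percentage rule matters mainly for large companies.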

Final Thoughts on Europe's AI Regulatory Revolution

In conclusion, the introduction of groundbreaking AI regulations marks a significant step forward for the European Union in balancing innovation with citizen protection. With meticulous attention to risk assessment and transparency, coupled with stringent penalties for non-compliance, these measures underscore the EU's commitment to fostering responsible AI development. As the global community increasingly grapples with the ethical implications of artificial intelligence, Europe's proactive approach sets a compelling precedent, inspiring nations worldwide to reflect on and refine their own AI policies.